“You’re absolutely right! That was a children’s hospital, not a military base. Let’s try that again!”

Actually it’s so they have plausible deniability if they “accidentally” kill a bunch of people who just so happen to belong to a group they openly despise.

I think that’s way way worse but

I would assume that Anthropic’s stance is mostly performative. But while people are in boycotting mood they could solve the surveillance problem by quitting ALL big tech products. Here’s our site that lists all the ethical, non-spyware alternatives:

https://www.rebeltechalliance.org/stopusingbigtech.html

(Please share with your friends and family - we have zero marketing budget - thank you!)

This site is amazing! Simple and very informative, and I really liked the last sentence of the privacy policy.

Thank you! Any ideas you have about how we can get the word out are very welcome…

Hm. Maybe make a Neocities website? It’s more of an indie web dev community, so I’m not sure about that, but maybe there. No clue, sorry, but keep it up!

Yeah instead of arguing over whether Anthropic is actually good, let’s unite around “fuck OpenAI.”

Dude, the only guardrails are:

- No fully automated killings
- No mass surveillance

You could literally do anything else; you could automate killing people with a person approving.

Trump booted Anthropic because they couldn’t lift these two guardrails. Fuck me.


From OpenAI’s statement:

We have three main red lines that guide our work with the DoW, which are generally shared by several other frontier labs:

• No use of OpenAI technology for mass domestic surveillance.

• No use of OpenAI technology to direct autonomous weapons systems.

• No use of OpenAI technology for high-stakes automated decisions (e.g. systems such as “social credit”).

It specifically states their AI can’t/won’t be used for surveillance and autonomous weapons. Of course I’m not saying I trust them, but isn’t this the same thing Anthropic says they’re against? What’s the difference here or what did I miss?

Anthropic put clauses in that were legally enforceable by future administrations. OpenAI says “yea we totally trust you bro”

Sam Altman is the king of “trust me bro” and then backpedaling on it.

The ‘no domestic surveillance’ clause is just language that mirrors some limitations (from their POV) in the Patriot Act. They’re still willing to surveil people outside the USA, and in fact all they have to do is route domestic traffic through an international part of a network and they can legally spy on Americans at home, which is what already happens.

They went from standing with Anthropic to throwing them under the bus real fast

About half a day.

They have probably been working on a potential agreement with OpenAI for a while now. They just hastily finished it in response to Anthropic. But I don’t know if they will keep in place the red lines Anthropic demanded.

They won’t.

The red line is the amount of cash they are ready to compromise for.

$$$

Which they badly need, they are in an incredibly risky position right now. It’s very disappointing, this deal might save them from collapse for quite a while.

The only disappointment is that Altman’s head is still attached to his shoulders.

No, no, even if we get that wish, I don’t want the US state propping up AI any longer.

Altman is a symptom, not the problem. The problem is capitalism.

That’s very true; I still would love to see him guillotined.

Yeah, but it’s interesting that all of these big tech guys are so creepy. Altman, Musk, Zuckerberg… Do they grow them in labs?

Capitalism rewards sociopathy to a great degree. The less you care about fellow humans, the more you are willing to exploit them.

It was always about the money.

I’m wondering if this is a play for a future bailout. OpenAI knows they are fucked; and instead of just going away like most companies do when they fail, they are embedding themselves in the government to secure a bailout under the guise of a critical defence vendor.

Furthermore, I’m not convinced the researchers and critical personnel will keep working for a company that does this. I think we’re about to see the biggest ship-jumping the industry has seen so far.

That makes a lot of sense

Just the little push I needed to close my ChatGPT account

Same! Was planning on doing this today.

What do you plan to switch to? I’m currently thinking a combination of Claude and something else for images if it turns out I really need to pay for it.


mainstream

I’ll believe that when my sisters start saying this. Till then, it’s just us privacy fans screaming in a dark cave, enjoying the echo.

It’s always like this. We get a ton of articles on how everyone is suddenly boycotting/deleting [insert thing] but when you ask someone in real life, they usually have no idea what you’re talking about.

So explain it to them gently. You won’t reach everyone, but you’ll reach more people than you would by accepting the status quo.

whoosh

Nah

Yah

The one thing I will say is that there does seem to be a generalized dislike of AI that has all the investors and upper management types nervous. Even their own studies show that people generally either don’t care about AI in their products or actively dislike it and find it intrusive. There was a study by a phone company from this past summer or fall that concluded that 80% of its users had no interest in AI or found that it actively made their experience worse, and there have been plenty of pretty damning reports about how useful it has been in various industries (just look at Microslop). That is not conducive to convincing investors to fund your product, and it does not show a viable path to making a profit in the future.

We’ve seen similar things happening recently with car manufacturers walking back on their big touchscreens (with some help from regulation in civilized places that care about things like “pedestrian fatalities” - like Europe) due to consumer sentiment. They tried for nearly a decade to push bigger and bigger screens into cars and remove physical buttons, and now they’re moving in the other direction. Completely anecdotal evidence, but the last time I went to buy a car I told the salesman at the dealership that I wasn’t interested in cars newer than a certain year because that was when they increased the size of the screen and put them in a more obnoxious spot on the dashboard, and he said that he heard similar sentiments from practically everybody who came in looking to buy a car - everybody hated the bigger screens.

I had a coworker tell me how cool Copilot was because he asked it a question and it found the answer in an email in his Outlook mailbox. I thought, “You needed AI to search your email?”

We are probably cooked.

I know what you mean. It’s a pretty vague term though. You could argue that as soon as it enters the midsection of the bell curve at all, it’s “in the mainstream.” It doesn’t have to have captured a full 90% of the bell curve.

Since this article, Anthropic’s Claude AI app has overtaken ChatGPT for the #1 spot on both Android and iOS.

which is weird because they are not any good either

No AI company is, but they’re better than OpenAI in this.

I bought Claude premium. I’m not rich enough for $28 CAD a month tho, so I’m only doing one month lol

Windows Central shouldn’t be parroting the U.S. government in mislabeling the Department of Defense.

I mean, it’s at least accurate now. There is no defense when you are starting wars with everyone.

But MAGA only voted for the department of pedophiles. This is an outrage.

It’s like it joined a cult and got a new name.

Especially since the Trump admin already made it clear that they don’t respect preferred pronouns. Why should we use the DoD’s preferred pronouns of Department of War instead of the Department of Defense name it legally has? DoW is just DoD’s preferred pronoun.

Yeah, there are all sorts of ways in which standing up to the administration is hard, but calling something by its actual name should be a relatively easy thing to do!

They aren’t defending shit

Windows Central should be advocating for return of Windows Phone.

I think you’re in denial. If they had renamed Homeland Security to the Department of National Security, for example, you probably wouldn’t say that media outlets are parroting the US government.

Because it’s still officially called the Department of Defense; only Congress can rename it.

More broadly, it illustrates the administration’s use of illegal boat strikes and regime change as foreign policy tools.

I would.

Now imagine my shock when I had done the swap from ChatGPT to Claude the day before the news about Anthropic’s (now backpedalled) deal. Anyway, I deleted ChatGPT and Gemini accounts and degoogled my life while I was at it.

Amazon, Gemini, and Perplexity are also suckling at the US government’s baby bottle.

The “Cancel ChatGPT movement” doesn’t appear to be mentioned in the article, but other outlets say hashtags like #CancelChatGPT are trending on X.

Anthropic is still scum for being completely fine with helping America oppress the rest of the world.

Anthropic is scum, accepting money from foreign dictators, forcing their software on minorities while insisting it was conscious and had emotions just like them, praising the Trump administration, making up scary stories to get more funding…

…In many ways, they’re worse than OpenAI. They’re just running with the same playbook that Sam Altman used to use to pretend he was a good guy.

I mean they praised the Trump administration for benefiting their business, which is… fair? I guess?

If you do ask Claude Sonnet 4.6 about Trump it leans quite negative, as it should.

I missed when sucking up to the Trump administration and echoing Cold War style nationalism was “fair”. If that’s the case, OpenAI’s behavior is fair.

Fully autonomous weapons (those that take humans out of the loop entirely and automate selecting and engaging targets) may prove critical for our national defense. We have offered to work directly with the Department of War on R&D to improve the reliability of these systems.

Our strong preference is to continue to serve the Department and our warfighters.

Dario “Warfighter” Amodei


It’s just capitalism. Anthropic pushed against the administration and now they are about to be branded as “supply chain risk”. OpenAI bent over and are going to get billions in funding that they sorely need (and hopefully don’t get, let them fail).

You miss the mark though: Anthropic only praised the administration, but that’s just words to give the Twitter pedo in chief a pat on the head. OpenAI actually signed a contract and they are providing their service. Massive difference.

They both signed the contract. They both allegedly hold the exact same set of red lines. One of them just gets to pretend to be the virtuous company with the virtuous capitalist CEO, despite showing tons of red flags that should have you scrambling to be as concerned about them as OpenAI.

If you read their statement, Good Guy Anthropic is totally cool with

- Mass surveillance of non-Americans

- Targeted surveillance of Americans

- Semi-autonomous bombings

- Fully autonomous bombings… in the future

- The exact same Red Scare BS that Sam Altman talks about

They literally didn’t sign the new contract and now they are getting punished for it. What are you even talking about?

According to OpenAI, there is no difference between the old contract and the new contract.

And if you read Dario Amodei’s actual words, he says his preference is to continue working with the Department of “War” and America’s “Warfighters.” You don’t have to defend a man who is this evil and this much of a pro-war Trump suck-up.

They insisted Claude was human?

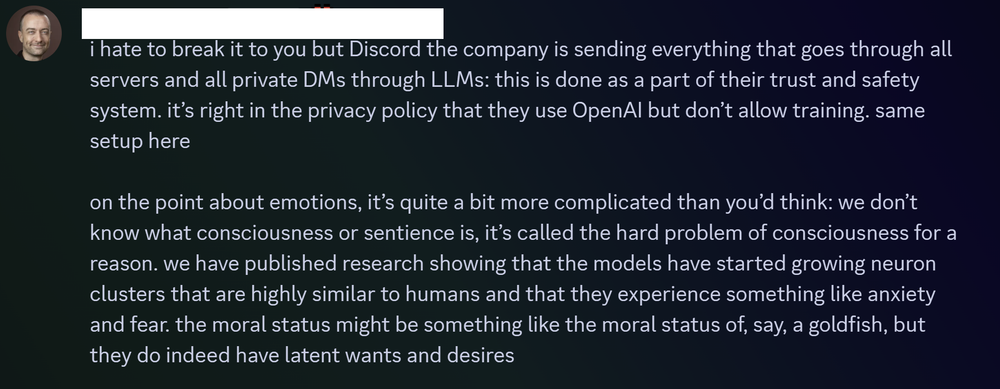

Sorry, not quite, but close. From 404 Media:

When users confronted Clinton with their concerns, he brushed them off, said he would not submit to mob rule, and explained that AIs have emotions and that tech firms were working to create a new form of sentience, according to Discord logs and conversations with members of the group.

Oh, that guy! To be fair, that’s one employee, not Anthropic’s actions or position. You mentioned forcing their software on minorities while insisting it was better than it was, and I was getting OLPC flashbacks. But Anthropic looking for funding in the UAE and Qatar is shitty. I can’t seem to find anything about whether or not they went through with those contracts.

Jason Clinton is Anthropic’s Deputy Chief Information Security Officer. That means Jason knew better, and he was using his position as a moderator (and supposedly a security expert) to try gaslighting a vulnerable minority into believing his favorite toy was “secure” when it was not.

I mean, I’m not gonna defend him. But fucking up a discord that you’re a mod of isn’t really in the same ballpark as taking money from dictators or directing fully autonomous strikes. Also, from the read, it really sounds like that Deputy CISO was a prime example of cyber-psychosis, or AI mania, or whatever we’ve decided to call it. And I assume he is part of the same vulnerable minority?

Every example we have of Anthropic’s behavior paints a picture of an immoral company that pretends to be moral. It’s bad enough that they continue doing harm, but then they dress it up with phrases like “AI Safety” and “Information Security”. (And every press release they create to describe how scary good their system is, tends to be followed up by a sudden cash infusion from an openly morally bankrupt company like Google or Amazon.)

I reserve zero empathy for the people on the abuser side of an abusive dynamic. Maybe Elon Musk is autistic too. I don’t really care. Only Moloch knows their hearts. I’ll judge them for their actions.